IterNoise

"The Butterfly Effect in Psychiatry" is the fitting title of a scientific article that uses a case study to explain how small events or hidden traumatic experiences can change a person's mental health, leading to conditions such as schizophrenia in which auditory hallucinations are a common symptom.

This interactive sculpture addresses the causes of auditory delusions through internal and external events (and, naturally, their combination), which can be separated into three scenarios:

- Perceiving sounds without auditory stimulus

- Misinterpreting other people's real voices and conversations

- Responding to people's negative attitude towards an individual with mental health problems

The sculpture represents brain wave activity and auditory memories through synthetic sounds and beats. These are generated according to the dominant virtual brain wave, which is determined by analysing the gray scale values in each video frame. The auditory memories are represented by audio samples taken just before the motors are activated. The motors then alter the camera position, which changes the resulting video image.

UPGRADE 06/18:

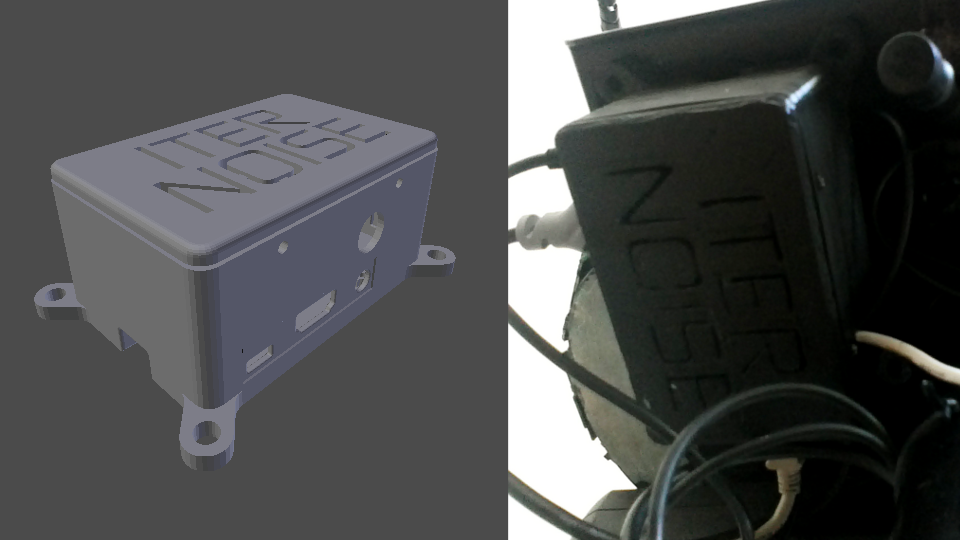

Three major hardware upgrades have been made to IterNoise:

- Replacement of the old TV tube with a 10" flat screen

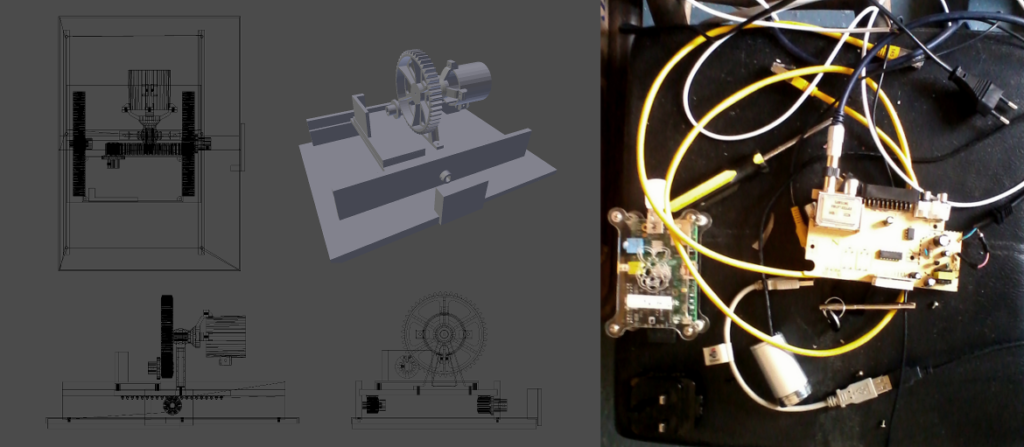

- Upgrade from Raspberry Pi 1 Model B to Raspberry Pi 2 Model B

- Modify and print case for Raspberry Pi

Result:

- Smoother performance on video loop feedback

- Raspbian OS much more stable

- More memory and CPU power for audio synthesis

- Compact Rpi case protects fragile wires of motor control

Rpi Case:

Video shows smoother performance of video loop feedback with audio:

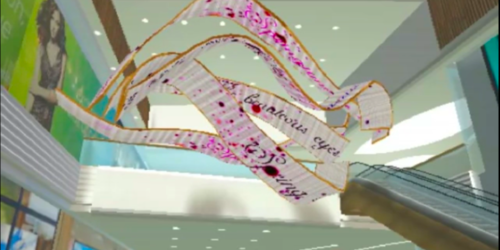

3D SBS Video of sculpture in action:

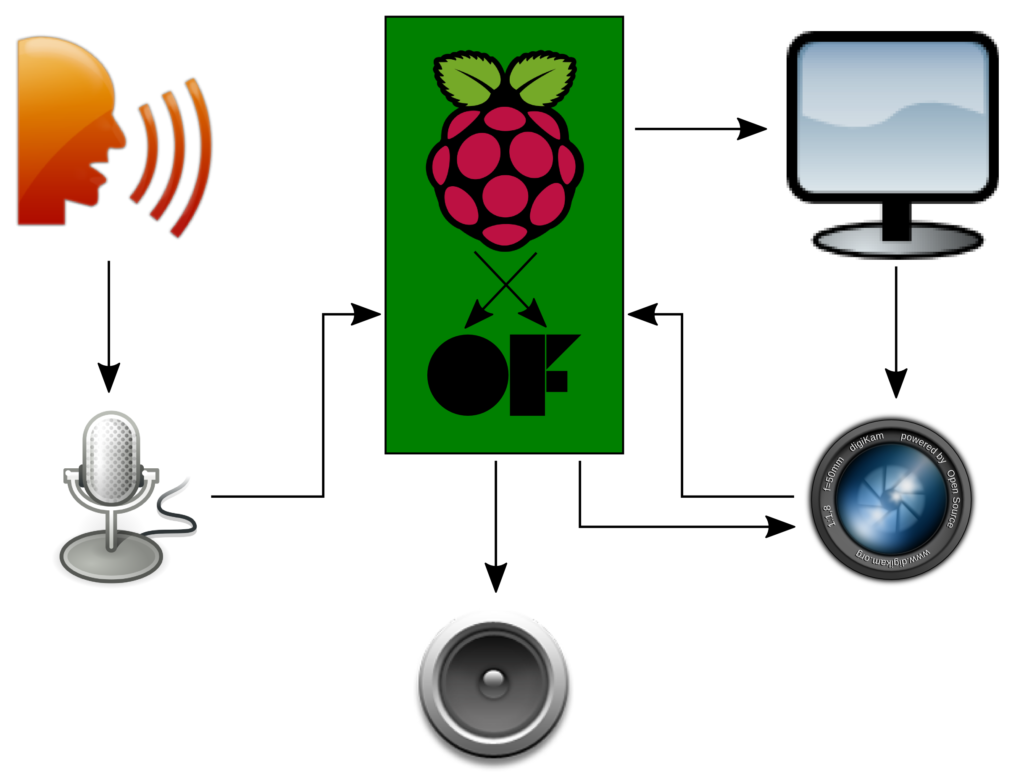

SOFTWARE

While watching the patterns that appear in the sculpture's real-time animation, created by a video feedback loop, we recognize forms and shapes as faces, bodies, landscapes, or man-made constructions. The interpretation relies on each person's ability to connect what is seen with emotionally related memories. The sound is generated from the mean of all pixels' gray scale values every time a new video frame is created.
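
The step from frame to sound can be sketched roughly as follows. This is a minimal illustration in plain C++, not the project's actual code: the function names, the assumption of 8-bit grayscale pixels, and the linear mapping to a 110-880 Hz pitch range are all hypothetical.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Mean gray value (0..255) of one frame stored as 8-bit grayscale pixels.
// Returns 0 for an empty frame.
double meanGray(const std::vector<std::uint8_t>& pixels) {
    if (pixels.empty()) return 0.0;
    unsigned long long sum = 0;
    for (std::uint8_t p : pixels) sum += p;
    return static_cast<double>(sum) / static_cast<double>(pixels.size());
}

// Hypothetical linear mapping from the mean gray value to a pitch in Hz.
// minHz and maxHz are illustrative defaults, not values from the project.
double grayToFrequency(double mean, double minHz = 110.0, double maxHz = 880.0) {
    return minHz + (mean / 255.0) * (maxHz - minHz);
}
```

Calling `grayToFrequency(meanGray(framePixels))` once per new frame would give the oscillator its next pitch: darker frames produce lower tones, brighter frames higher ones.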

Brainwave frequencies as beat

Besides the overall mean of the gray scale values in each new video frame, the software also calculates mean values for 5 ranges across the entire spectrum. Those means select the dominant waveform, which represents the dominant active brain wave. The generated sound plays the frequency calculated from the overall mean of the gray scale values and is triggered between 0 and 100 times a second (0 - 100 Hz), depending on which brain wave is dominant.
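
One possible reading of this selection step is sketched below. It rests on several assumptions that are not taken from the project: the gray scale is split into five equal ranges, the range holding the most pixels counts as dominant, and each range maps to one representative beat rate inside the commonly cited delta, theta, alpha, beta, and gamma bands.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>
#include <vector>

// Index (0..4) of the gray range that holds the most pixels.
// The 0..255 scale is split into five ranges of 51 values each
// (255 is clamped into the last range). Ties resolve to the lowest index.
std::size_t dominantRange(const std::vector<std::uint8_t>& pixels) {
    std::array<std::size_t, 5> counts{};
    for (std::uint8_t p : pixels) {
        std::size_t idx = p / 51;
        if (idx > 4) idx = 4;
        ++counts[idx];
    }
    std::size_t best = 0;
    for (std::size_t i = 1; i < counts.size(); ++i) {
        if (counts[i] > counts[best]) best = i;
    }
    return best;
}

// Assumed beat rate in Hz for each range: delta, theta, alpha, beta, gamma.
// The concrete numbers are illustrative placeholders.
double beatRateHz(std::size_t range) {
    static const std::array<double, 5> rates = {2.0, 6.0, 10.0, 20.0, 40.0};
    return rates[range < rates.size() ? range : rates.size() - 1];
}
```

With this mapping, a mostly dark frame would pulse the tone slowly, while a mostly bright frame would trigger it many times a second.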

Camera movements triggered by external audio

A self-image is altered by one's environment, especially at a younger age, up to around the age of 20. It does not necessarily stop there, though adults usually get used to being looked at or talked about. The sculpture's camera system reacts when the sound in the environment reaches a threshold monitored by a volume meter. When the motors change the roll position and the distance between the camera and the TV, new images are created by the internal video feedback loop.
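
A volume meter of this kind can be approximated by the RMS level of each incoming audio buffer. The sketch below assumes float samples in the range -1 to 1; the function names and the threshold of 0.2 are hypothetical and would need tuning for the room.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Root-mean-square level of one audio buffer (samples assumed in -1..1).
// Returns 0 for an empty buffer.
double rmsLevel(const std::vector<float>& buffer) {
    if (buffer.empty()) return 0.0;
    double sumSquares = 0.0;
    for (float s : buffer) sumSquares += static_cast<double>(s) * s;
    return std::sqrt(sumSquares / static_cast<double>(buffer.size()));
}

// True when the buffer is loud enough to trigger a camera movement.
// The default threshold is an illustrative value, not the project's setting.
bool shouldMoveCamera(const std::vector<float>& buffer, double threshold = 0.2) {
    return rmsLevel(buffer) >= threshold;
}
```

In an audio input callback, a `true` result would be the cue to drive the motors to a new roll position and camera distance.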

Audience sampling

The threshold that triggers the camera movements via the motors also starts a recording of the noises responsible for the activation. This recording is then mixed into the audio output of the generated sound.
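
The mixing step can be illustrated with a plain sample-by-sample sum. This sketch does not use the ofxMaxim API; the function name, the gain of 0.5 for the recording, and the hard clipping to the -1 to 1 range are assumptions made for the example.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Mixes a recorded sample into the generated sound, sample by sample.
// The output has the length of the generated buffer; a shorter recording
// simply stops contributing. The sum is clipped to -1..1.
std::vector<float> mixBuffers(const std::vector<float>& generated,
                              const std::vector<float>& recorded,
                              float recordedGain = 0.5f) {
    std::vector<float> out(generated.size());
    for (std::size_t i = 0; i < generated.size(); ++i) {
        float sample = generated[i];
        if (i < recorded.size()) sample += recordedGain * recorded[i];
        out[i] = std::max(-1.0f, std::min(1.0f, sample));
    }
    return out;
}
```

Lowering `recordedGain` keeps the synthetic tone in the foreground, while raising it lets the audience's own noise dominate the output.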

The main development has been done in openFrameworks using C++. Its addons make it easy to access libraries written by artists and programmers to support their own projects, as well as the projects of other people in the OF community.

ofxMaxim has been used in this project to generate sound and mix it with recorded audio samples.

ofxReverb is an addon that adds the illusion of a wide space to the generated sound.

ofxXml saves the range values to a file in XML format, so that changed parameters are loaded automatically on restart.

Finally, gpioclass.h and gpioclass.cpp manage access to the GPIO pins of a Raspberry Pi from C++. The available ofxGPIO addon did not work on the Raspberry Pi, so I had to search for an alternative. These files need to be copied directly into the source folder and included in the openFrameworks project. I had to modify the files to get them to work, which was fairly easy. Several control loops searched for temporary files created in the GPIO folder on Linux. These files no longer exist in newer distributions, so I simply commented out those parts of the code.
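
For readers unfamiliar with this kind of GPIO access, the sketch below shows the general idea, assuming the class works through the Linux sysfs interface under /sys/class/gpio (an interface that newer kernels have deprecated). It is not the code from gpioclass.h; all function names here are hypothetical, and the base path is a parameter so the functions can be tried without real hardware.

```cpp
#include <fstream>
#include <string>

// Builds the path of a sysfs GPIO attribute, e.g. ".../gpio17/value".
std::string gpioAttributePath(const std::string& base, int pin,
                              const std::string& attribute) {
    return base + "/gpio" + std::to_string(pin) + "/" + attribute;
}

// Writes text to a file. Returns false if the file cannot be opened
// or the write fails.
bool writeText(const std::string& path, const std::string& text) {
    std::ofstream file(path);
    if (!file.is_open()) return false;
    file << text;
    file.flush();
    return file.good();
}

// Exports a pin, configures it as an output, and sets its level.
// The export result is ignored because the pin may already be exported.
bool setPinOutput(int pin, bool high,
                  const std::string& base = "/sys/class/gpio") {
    writeText(base + "/export", std::to_string(pin));
    if (!writeText(gpioAttributePath(base, pin, "direction"), "out")) return false;
    return writeText(gpioAttributePath(base, pin, "value"), high ? "1" : "0");
}
```

On a Raspberry Pi, a call such as `setPinOutput(17, true)` would usually need root privileges or membership in the gpio group; pin 17 is only an example.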

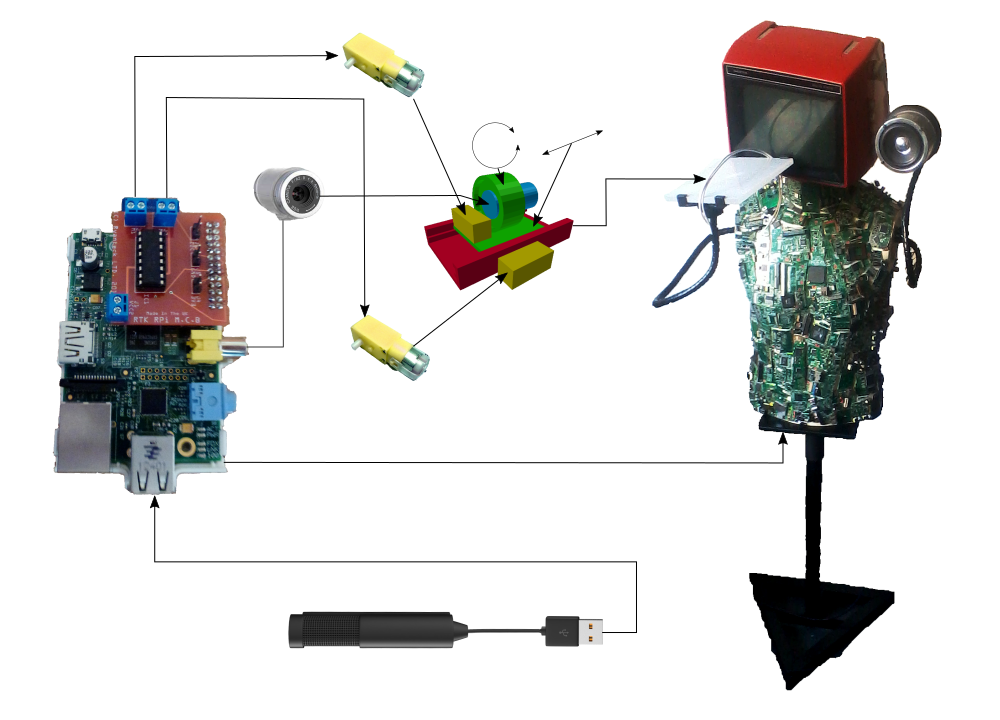

HARDWARE

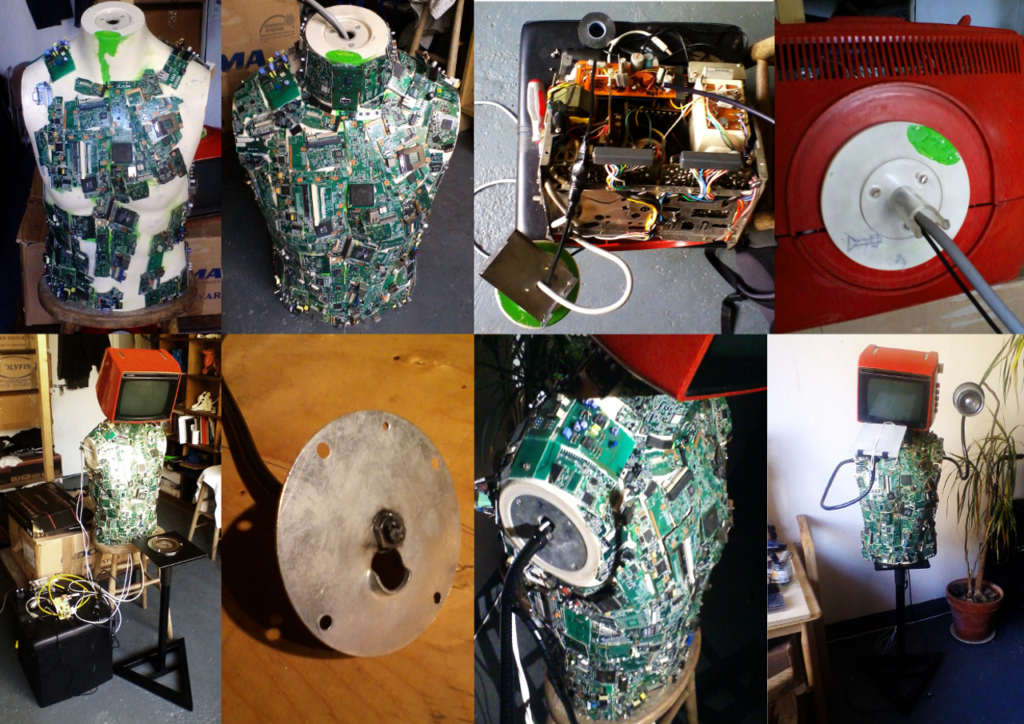

IterNoise has a male torso made from a shop window mannequin, covered in pieces of motherboards and peripheral cards. The red TV, representing the head, is connected to an ADC device that converts the analog video signal for the Raspberry Pi composite input. I chose the TV for its matching size and color, as experiences of paranoia can lead to hot-headed reactions. The red represents intense brain activity. The broken motherboard pieces were not chosen primarily for their meaning, but they can be related to discussions about the mental health of digital existence (e.g. the phantom pain of an artificial intelligence).

The torso is covered with broken motherboard pieces. Breaking the boards and attaching them to the torso was quite straightforward. From the experience of creating my first sculpture of this kind, I knew which tools and materials to use. London Hackspace helped with broken motherboards and cards, providing enough material to cover the entire upper body of the sculpture. The parts are glued on with a hot glue gun, and black paint gave it a final touch.

The head is an old camper TV I bought on eBay. The TV did not work properly, so I had to fix it. An alternative would be to replace the tube with a new LCD screen while keeping the TV case.

The arms are bendable iPad holders, which at first did not work as arms. The bolts could not be tightened enough to support the weight. I had to get them welded at a car shop around the corner.

The speaker is built from an acrylic tube and an old PC speaker. A small amplifier has been added to get a decent sound volume. Its power comes from a soldered USB cable connected back to the Raspberry Pi.

The sculpture stand was originally a speaker stand, but its design was perfect for the purpose. The triangular shape of the foot can be seen symbolically as a fire sign, and the red TV likewise supports the visual representation of heat.

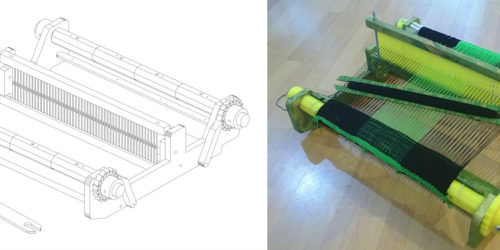

The camera mechanics were modeled in Blender3D and printed on an Ultimaker Standard 3D printer using black PLA filament. I had to scale the parts by 2-3 percent when exporting them to the slicing software Cura to compensate for the material shrinking as it cools.

The Raspberry Pi runs Raspbian with a real-time kernel, the JACK audio server, and openFrameworks. The project is compiled directly on the Raspberry Pi, which is controlled from a connected laptop (running Ubuntu Studio) via an ssh script and an Ethernet cable. This setup is only used during development. Later on, the Raspberry Pi runs autonomously and launches the project on startup. An installed switch shuts down the computer.

BEFORE:

AFTER:

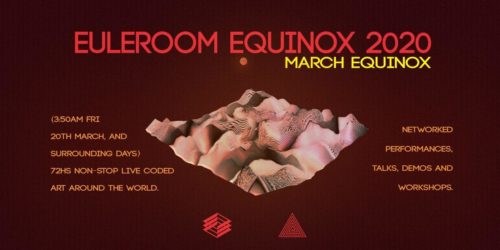

Final at the 2016 METASIS exhibition:

SOURCE CODE: